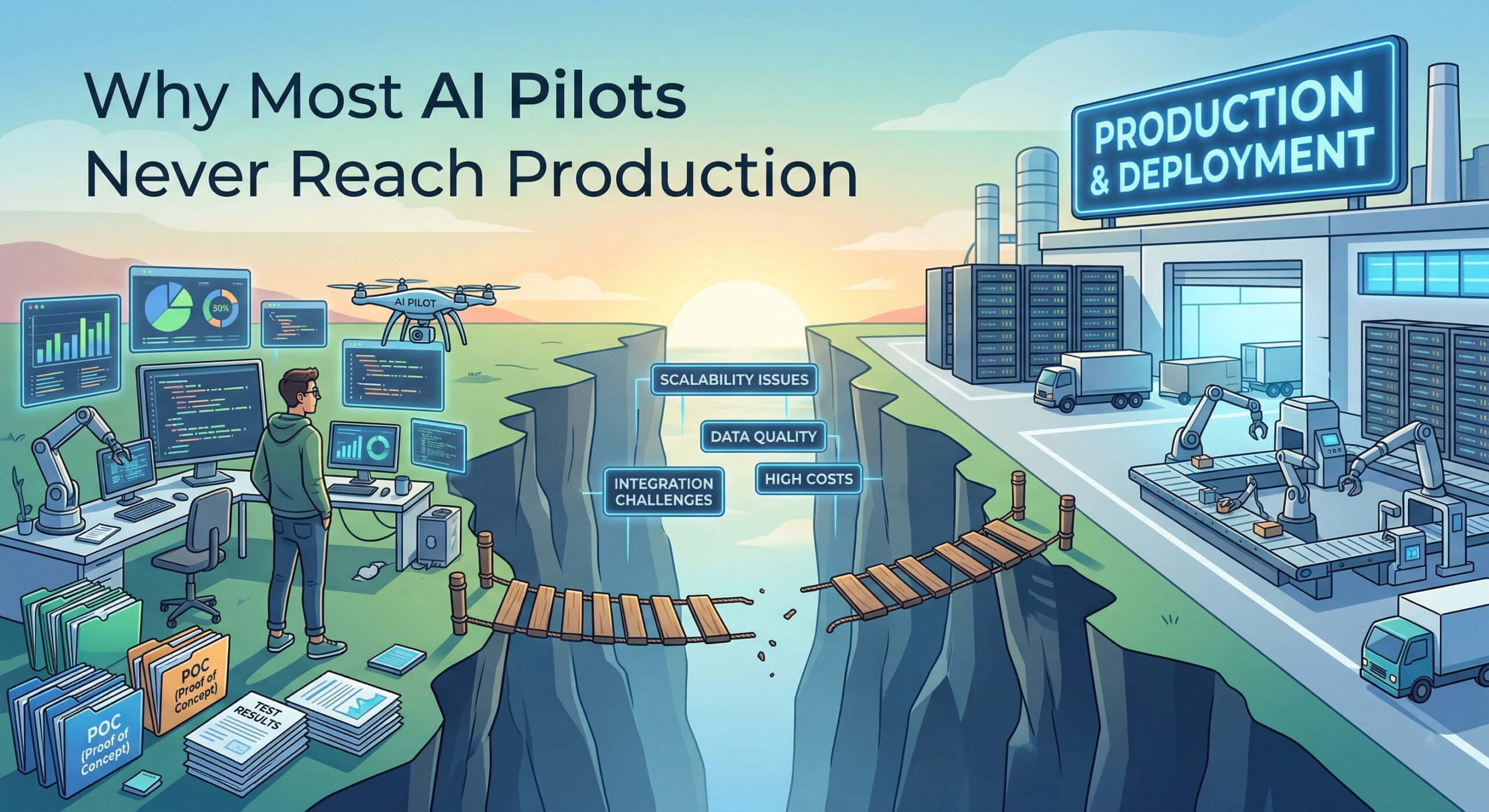

If you’ve ever watched an AI initiative quietly die between quarterly reviews, you’re not alone. Across industries, companies are launching AI pilots — and most of them are stalling. Not because the technology doesn’t work, but because the structure around the pilot isn’t built to succeed. The result is a pattern so common it’s earned a name: pilot purgatory.

The Numbers Are Harder to Ignore Than You Think

Industry data from 2026 paints a stark picture. For every 33 AI pilots a company launches, roughly 4 graduate to production deployment, a success rate of about 12%. According to Gartner (August 2025), 40% of enterprise applications are expected to feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. The pressure to run AI pilots is therefore intensifying just as the failure patterns are becoming clearer. The gap between “we’re piloting AI” and “we’re running AI in production” is significant, and entirely preventable.

It’s Not a Technology Problem

Here’s the thing most teams miss: AI pilots don’t fail because the models underperform. They fail because of structural gaps that were present before the first line of code was written.

Research from early 2026 consistently points to the same root causes:

- 73% of failed AI pilots lacked clearly defined success metrics before launch

- 56% lost executive sponsorship within the first six months

- Generative AI pilots frequently face infrastructure costs running 3–5× original projections at scale

- Mid-sized companies with 10 to 250 employees fail at disproportionately higher rates — they inherit enterprise expectations without enterprise infrastructure

The common thread is not the AI. It is the absence of a formal go/no-go framework: a predefined point at which the pilot is either promoted to production or stopped. Without one, pilots drift.
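To make the idea of a go/no-go framework concrete, here is a minimal Python sketch, not a prescribed method. It assumes success metrics are written down as targets before launch and that the gate returns "go" only when every one of them was measured and met. The `Kpi` class, the `go_no_go` function, and all metric names and numbers are hypothetical, invented for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    """One success metric, fixed before the pilot starts (hypothetical structure)."""
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, measured: float) -> bool:
        # Compare the measured value against the target agreed at launch.
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


def go_no_go(kpis: list[Kpi], measured: dict[str, float]) -> str:
    """Return "go" only if KPIs exist and every one was measured and met."""
    if not kpis:
        return "no-go"  # no predefined metrics means nothing to validate against
    for kpi in kpis:
        if kpi.name not in measured:
            return "no-go"  # an unmeasured KPI cannot justify a launch
        if not kpi.met(measured[kpi.name]):
            return "no-go"
    return "go"


# Illustrative targets and results only; none of these numbers come from a study.
kpis = [
    Kpi("invoice_time_reduction_pct", target=40.0),
    Kpi("error_rate_pct", target=2.0, higher_is_better=False),
]
decision = go_no_go(kpis, {"invoice_time_reduction_pct": 43.0, "error_rate_pct": 1.5})
print(decision)
```

The point of the design is that the targets live in the `kpis` list before any result exists, so the definition of success cannot quietly move once the numbers come in.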

Recognizing Pilot Purgatory

Pilot purgatory looks harmless from the outside. The project is “in progress.” Leadership receives regular check-ins. Demos look promising. But months pass and nothing ships.

Here are the warning signs worth watching for:

- The definition of success keeps shifting to match whatever the AI did well this week

- No one can articulate a clear deployment date or formal trigger condition

- The team is still “cleaning data” well past month two

- Stakeholders are cautiously supportive but not actively accountable

- The pilot team has grown in size — but production readiness conversations haven’t started

The deeper problem is organizational, not technical. Without a hard deadline, scope expands. Without predefined KPIs, retrospective justification fills the void. Without committed executive sponsorship, budget and priority quietly erode.

Why Time-Boxing Changes the Dynamic

A sprint-based AI pilot operates under a different logic. The premise is straightforward: if you can’t demonstrate meaningful value in four to six weeks on a well-scoped use case, you need to either sharpen the scope or change the use case — not extend the timeline.

Time-boxing creates several things an open-ended pilot simply cannot provide:

- Decision urgency: Teams focus on what matters because there’s a hard stop date.

- Predefined KPIs: Success is defined before development begins, not after results come in.

- Faster learning cycles: Six weeks of focused effort generates more actionable signal than six months of unfocused progress.

- Bounded cost exposure: If a sprint doesn’t validate the hypothesis, the cost is weeks of effort — not eleven months and millions in write-offs.
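The bounded-cost point is simple arithmetic, sketched below in Python under loudly stated assumptions: a flat weekly team cost (the `weekly_burn` figure is hypothetical, not taken from any study), a six-week sprint, and an eleven-month open-ended pilot converted to weeks using an average month length.

```python
WEEKS_PER_MONTH = 52 / 12  # average month length in weeks; an approximation


def effort_cost(weekly_burn: float, weeks: float) -> float:
    """Cost of running a team for a number of weeks at a flat weekly burn."""
    return weekly_burn * weeks


weekly_burn = 25_000.0  # hypothetical team cost per week, for illustration only
sprint_cost = effort_cost(weekly_burn, weeks=6)
open_ended_cost = effort_cost(weekly_burn, weeks=11 * WEEKS_PER_MONTH)
ratio = open_ended_cost / sprint_cost

print(f"sprint: {sprint_cost:,.0f}")
print(f"open-ended: {open_ended_cost:,.0f}")
print(f"ratio: {ratio:.1f}x")
```

Whatever the real weekly figure is, the ratio depends only on the durations: under these assumptions an eleven-month pilot exposes roughly eight times the effort cost of a six-week sprint before any decision is forced.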

The structural difference between the two approaches matters at every stage:

| Dimension | Open-Ended Pilot | Sprint-Based Pilot |
|---|---|---|
| Timeline | Indefinite | 4–6 weeks |
| Success criteria | Often defined post-hoc | Defined on Day 1 |
| Decision trigger | Unclear | Formal go/no-go gate |
| Scope | Tends to expand | Constrained to one use case |
| Cost exposure | High and unpredictable | Controlled and bounded |
| Production readiness | Often an afterthought | Built into the sprint from Week 1 |

Four Things to Lock In Before Your Sprint Starts

Most failed AI pilots never answered these four questions before work began. Answering them upfront is what separates a sprint that ships from one that stalls.

- What business outcome are we validating? Not “can the AI do X” — but “will this reduce invoice processing time by 40%?” Specific, measurable, tied to a real operational target.

- Is our data accessible and usable today? Data quality issues discovered in month two are project killers. Run a data readiness assessment before the sprint begins — not during it.

- Who owns the go/no-go decision, and are they committed? Projects with sustained C-suite sponsorship succeed 68% of the time; those that lose executive support succeed only 11% of the time.

- What does a failed sprint mean — and what will we do with that result? A sprint that doesn’t validate the hypothesis isn’t a failure. It’s six weeks of evidence pointing toward a better decision, not six months of sunk cost.
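One way to hold a team to the four questions above is to treat them as a charter that must be complete before the sprint starts. The Python sketch below is an assumption about how that could look, not an established template: the `SprintCharter` fields and the `open_questions` helper are hypothetical names, and the example values are invented.

```python
from __future__ import annotations

from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class SprintCharter:
    """The four pre-sprint answers; None means the question is still open."""
    business_outcome: Optional[str] = None       # measurable outcome being validated
    data_ready: Optional[bool] = None            # result of the data readiness check
    decision_owner: Optional[str] = None         # named owner of the go/no-go call
    plan_if_not_validated: Optional[str] = None  # what happens if the hypothesis fails


def open_questions(charter: SprintCharter) -> list[str]:
    """List charter fields that are unanswered, empty, or (for data) answered 'no'."""
    missing = []
    for f in fields(charter):
        value = getattr(charter, f.name)
        if value is None or value is False or value == "":
            missing.append(f.name)
    return missing


# Example: outcome and owner are settled, data readiness and the fallback plan are not.
charter = SprintCharter(
    business_outcome="Reduce invoice processing time by 40%",
    decision_owner="COO",
)
print(open_questions(charter))
```

For this example the helper reports `data_ready` and `plan_if_not_validated` as still open, which under this scheme would mean the sprint should not start yet.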

What to Carry Into Your Next AI Initiative

The companies running AI successfully in 2026 aren’t necessarily better resourced or more technically sophisticated than their peers. They’re more structured. They treat AI pilots the way experienced product teams treat sprint cycles — bounded, hypothesis-driven, and accountable to real outcomes.

A few principles worth holding onto:

- Start with one well-scoped, high-impact use case — not a multi-department transformation

- Write the success criteria before writing a single workflow or prompt

- Conduct a data and process readiness audit before the sprint begins

- Assign a named executive sponsor who will honor the go/no-go gate

- Keep the sprint to six weeks maximum — if value isn’t demonstrable by then, adjust scope, not timelines

If you’re planning your first AI pilot or trying to understand why a previous one lost momentum, a structured AI Pilot Sprint — with clearly defined scope, measurable success criteria, and experienced delivery support — can give you real answers in weeks, not quarters. The question isn’t whether to start. It’s whether to start with the right structure in place.
